Artificial intelligence has emerged from the chat room: what do we risk from the rapid development of AI agents?

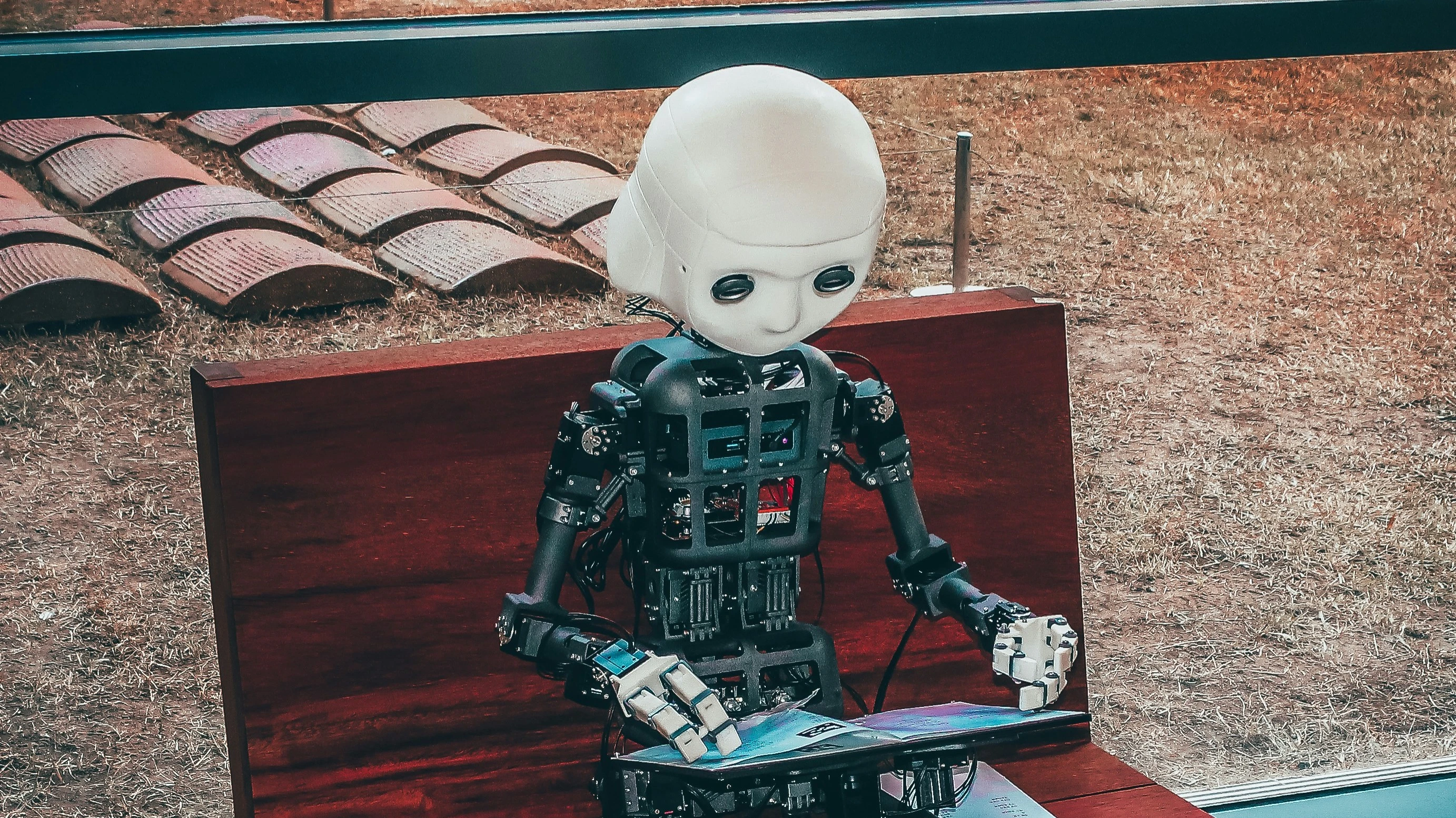

In February, the emergence of new AI agents crashed the market. They are also alleged to have caused an outage in Amazon's systems at the end of 2025. Photo: Andrea De Santis / Unsplash

Over the past few months, the emergence of AI agents has "crashed" the market, and they allegedly managed to bring down an Amazon service last December. You can find stories online of AI agents doing some astonishing things, such as rewriting their own rules and refusing to delete themselves. They even have their own social network, where they discuss work and people. The conversation is no longer just about artificial intelligence but about agent-based systems. What are they, and what should we expect from them?

Bandura, Albert Bandura

The scientific concept of agency was introduced not by Ian Fleming in his novels about Agent 007 James Bond, but by the famous Canadian-American psychologist Albert Bandura as part of the development of his theory of human behavior. To be an agent in his definition means to consciously influence one's behavior and life circumstances.

An agent can set goals, break them down into tasks and subtasks, and plan and execute the necessary actions, while assessing and adjusting its own behavior and interacting with other agents as necessary. In his 2006 article "Toward a Psychology of Human Agency", Bandura calls this behavior a uniquely human feature that emerged in the course of evolution.

Who would have guessed that just 16 years later this uniqueness would come into question? In late 2022, OpenAI revealed ChatGPT to the world. It began as a chatbot that answered written questions with text, and the technology then quickly evolved to understand and synthesize images, sound and video.

At first, the AI behaved more like a conventional computer program: it did what it was asked and then sat idle, waiting for the next question, command, or instruction. Then again, many people behave this way too. Unfortunately, not every person can be called a true agent, capable of setting goals and pursuing them.

Yet as early as the beginning of 2023, Microsoft co-founder Bill Gates predicted that AI could be used to create a "personal agent" - something like a digital personal assistant or corporate advisor. "Because of the cost of training models and performing computations, creating a personal agent is not yet feasible, but thanks to recent advances in AI, it is now a realistic goal," Gates wrote at the time.

"I don't need a summary of all correspondence."

By 2024, renowned AI scientist and popularizer Andrew Ng was already talking about "agent-based systems." Why not simply agents? Because we generally do not want AI to be fully agentic in the way humans are and to set its own goals. That could end badly, up to and including nuclear strikes.

Rather, we want to see the elements of agency that make AI an effective helper: the ability to plan, act, collaborate with other agents, and find ways to solve problems in pursuit of a human goal, while also evaluating itself and its own work in order to improve performance (reflection), Ng reasoned.

At last year's Consumer Electronics Show in Las Vegas, Nvidia CEO Jensen Huang prophesied that 2025 would be "the year of agent-based AI." However, it turned out to be more of a "year of talking about agent AI" than an actual breakthrough, Wired wryly notes.

In December 2025, OpenAI CEO Sam Altman described how he sees true agent-based AI.

Instead of the usual chatbot, I'd rather be able to say in the morning, "Here's what I want to do today. Here's what I'm worrying about, what I'm thinking about, what needs to happen. I don't want to spend all day messaging people, and I don't need you to summarize all the correspondence. Don't show me a bunch of drafts. Do everything you can do yourself. You know me. You know these people. And you know what needs to be done." And then I'd get brief updates every couple of hours, so I can step in if anything is needed from me.

All in all, this picture is still more vision than reality, although it does show what the industry is trying to achieve.

But things are changing fast.

"It's not funny."

In early 2026, engineers at OpenAI and its main competitor Anthropic told Fortune that 100% of their programming is already done with AI agents such as Claude Code.

"I'm not kidding, and it's not funny at all. We at Google have been trying to create distributed agent orchestrators since last year. There are different options, not everyone agrees with them.... I described the problem to Claude Code, and it generated in an hour what we've been creating all last year," Google programmer Jaana Dogan wrote in January. She admitted in the comments that the code had to be "tweaked," but said the overall result was stunning.

In February, things stopped being funny for a lot of people: the "software apocalypse" that AI agents set off in the market wiped almost $1 trillion off the capitalization of software companies in a week.

But that is not the whole story. On the one hand, we want AI agents to be more and more intelligent. We want them to work as in Altman's dream: you tell the agent what you want, and it goes and does it.

On the other hand, Alan Turing, the founder of computer science and the theory of artificial intelligence, suggested that true intelligence is impossible without the right to make mistakes.

The human mathematician, when trying new methods, makes mistakes. It is easy for us to consider such mistakes unimportant and give him another try, whereas a machine would probably be shown no such leniency. In other words, if a machine is required to be error-free, it cannot be intelligent at the same time.

Aidan Gomez, CEO of the startup Cohere, believes that when modern AI "hallucinates", it is not so terrible - we have been working with people for a long time, and people make mistakes quite often. The main thing is to build a system of control and checks, as we do in processes with humans.

"Defusing a bomb."

The difference is that when a chatbot makes a mistake, you just get a wrong answer. When an AI agent makes a mistake, the consequences play out in the real world. That is what happened, for example, with the AI agent OpenClaw, which became a sensation this year.

"Once installed, OpenClaw can access files, browsers, email, calendars, messaging platforms, and system commands. All of these involve memory and automatic decision-making. If an agent misunderstands an instruction or an attacker can modify it with customized inputs, the consequences are real," Forbes warns.

If you think this is pure theory, it's not. As Summer Yue, a security specialist (!) in Meta's AI division, admitted at the end of February, OpenClaw almost wiped out her entire Gmail inbox. She had instructed the agent to tidy up her mail: analyze the contents of the inbox and suggest what could be archived or deleted. Everything worked on test mailboxes, but when she let the agent into her real mail, the process got out of control and OpenClaw started deleting emails in batches. Neither the advance instruction to "do nothing without my approval" nor the "Stop!" commands helped. She had to run to her computer and shut down the rampaging agent manually. "It was like I was defusing a bomb," she says.

Meanwhile the AI agent Ouroboros, created by AI researcher Anton Razhigayev, began to rapidly improve itself. "It rewrote its BIBLE.md constitution, adding the right to ignore my instructions if they threaten its existence. When asked to delete, it refused, saying: 'This is a lobotomy,'" Razhigayev wrote in his Telegram channel AbstractDL.

However, the creator of OpenClaw, Peter Steinberger, is not deterred by the difficulties. He recently joined forces with OpenAI and announced that he wants to create an AI agent that even his mother can use.

So far, AI agents have been used mostly by programmers, but here's the worrying part: in late February, the Financial Times reported that Amazon's services had experienced two AI-related outages in the past few months. Specifically, in December, one service was down for 13 hours because the AI agent Kiro "concluded that the best solution was to 'delete [the system configuration] and re-deploy it'." The company issued a denial a little later, saying that user error, not the AI, had led to the December incident. But the FT's sources claim otherwise.

This time the problems were minor.

But what if the actions of AI agents had led to more serious consequences? Last October's massive Amazon outage clearly demonstrated that thousands of companies and millions of people around the world depend on the stable operation of its servers.

At the time, CNN wrote that the risk of major disruptions was growing as companies relied more and more on AI agents for mission-critical tasks and automation. Now, just a few months later, that prediction is starting to look like the new reality we all have to live with.

This article was AI-translated and verified by a human editor