Google is stepping up competition with OpenAI and Nvidia. What did the company show in Las Vegas?

The tech giant has reimagined the use of AI in business and released a platform for humans and AI agents to work together

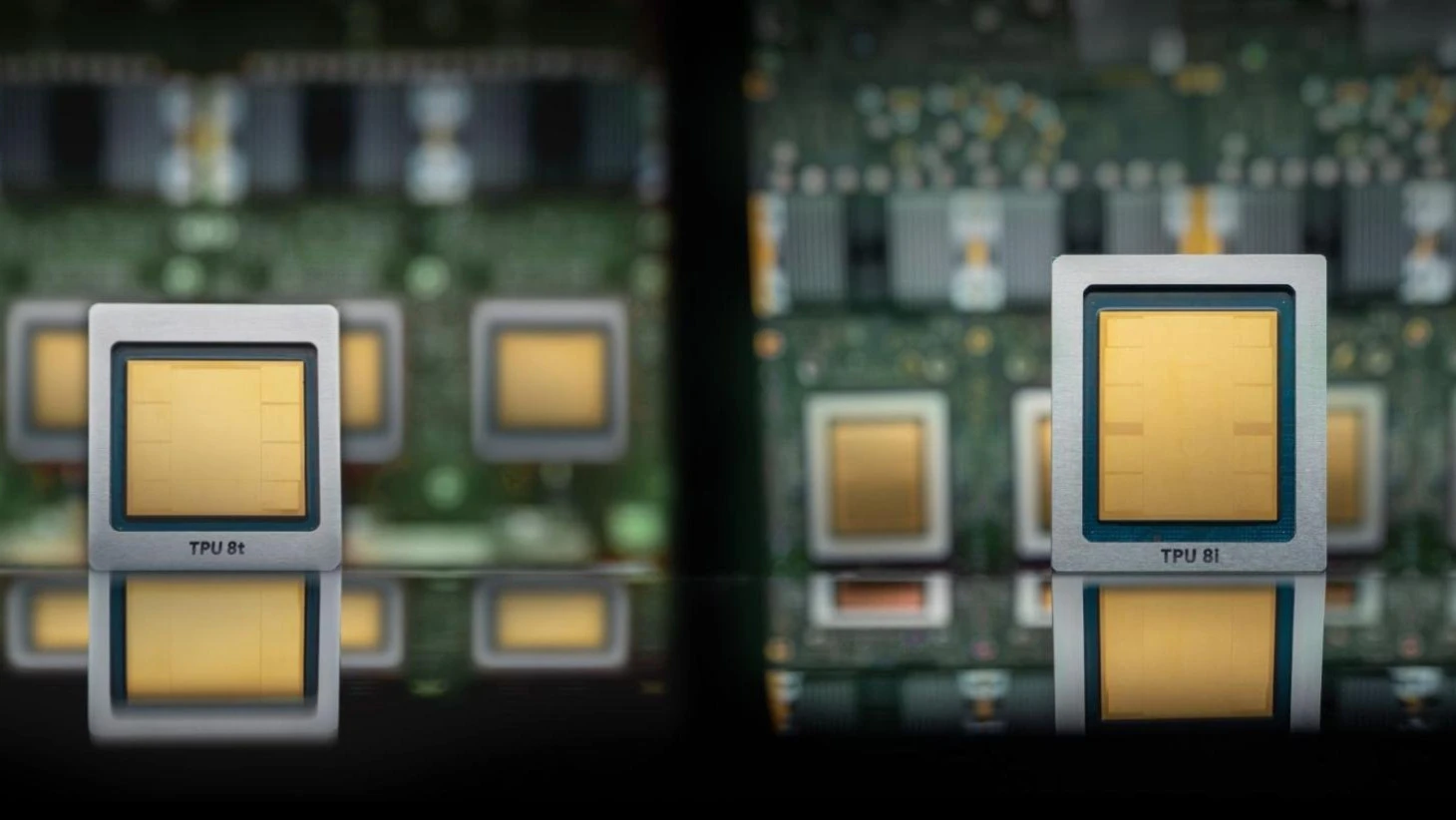

In its new generation of AI chips, Google, like Nvidia, has split them by purpose: model training and inference / Photo: Google

Alphabet, Google's parent company, presented new tools for building AI agents at its annual conference in Las Vegas; they are meant to help corporate clients automate tasks. With this move, the tech giant is competing with startups OpenAI and Anthropic in the fast-growing enterprise AI market, Bloomberg notes.

At the same time, Google has a significant competitive advantage: its own data centers built on its own chips. Alphabet plans to release a new generation of processors, one of which is designed for inference, that is, running AI models after they have been trained (for example, to generate responses to user or agent requests). "This isn't about offering individual services that can be cobbled together; it's about creating a comprehensive framework for innovation," Google Cloud CEO Thomas Kurian said in a blog post.

New AIs

Google announced on Wednesday that it will unify several AI products under the Gemini Enterprise brand. This includes, among other things, rebranding and expanding the functionality of Vertex AI, a tool that allows cloud customers to choose from a variety of AI models for business use, Reuters writes.

At its annual conference in Las Vegas, Google's cloud division showed off a set of tools that can be used to create AI agents and track their work within companies. Updates to the entire suite of Google Workspace applications were unveiled. In the future, AI agents will radically change the daily work of the average employee, Google predicted.

The company's researchers created much of the technology that launched the current AI boom, Bloomberg notes, but now Google is in a tight race with leading AI agent developers for enterprise customers who expect to boost productivity.

OpenAI last week upgraded its Codex agent model, an AI that builds applications from a user's text descriptions. The new model can now perform tasks on a PC autonomously. A month ago, Anthropic did the same with its Claude Code. Both companies are already fighting for enterprise customers. If SpaceX manages to buy Cursor, a developer of AI applications for programmers, its chatbot Grok will also evolve into an agent and become a prominent alternative.

Google executives are increasingly concerned that the company has begun to fall behind in the market for AI programming tools, Bloomberg writes. Many engineers in Silicon Valley switch between Claude Code and Codex to see which AI produces better results, but Google often doesn't even figure in that discussion, startup founders told the agency. On Wednesday, in an effort to attract developers, Google said its Gemini Enterprise Agent Platform will get new features, including Memory Bank and Memory Profile, that will help agents remember past interactions with users, a weakness of some early AI tools, Bloomberg explains. Another new feature, Agent Simulation, will allow developers to test these tools more thoroughly before launch.

Google said enterprise customers will be able to use the Gemini Enterprise app, which the company called "the main entry point to AI for every employee," to create agents without a single line of code.

Google also announced the launch of Projects, a collaboration platform for people and agents. According to the company, the tool combines information from sources such as Workspace, Microsoft's OneDrive, and corporate chats so that agents work with the right context.

New AI chips

After several years of releasing chips capable of both training AI models and performing inference, Google will split these functions between separate processors, CNBC writes. This is another attempt by the company to challenge Nvidia in the AI hardware market, the network said. Google said on Wednesday that it will make the change in the eighth generation of its tensor processing unit (TPU 8i). Both chips, one for training and one for inference, will hit the market later this year.

"As the number of AI agents grows, we concluded that the community would benefit from chips that are each specialized for model training and serving," Amin Vahdat, Google's senior vice president and chief technology officer for AI and infrastructure, wrote in a blog post.

DA Davidson analysts in September valued the TPU business, along with Google's AI group DeepMind, at about $900 billion, CNBC notes.

The use of Google's AI chips is gaining momentum, CNBC writes. Citadel Securities has built quantitative research software on Google TPUs, and all 17 national laboratories of the U.S. Department of Energy use AI co-scientist software built on these chips. Anthropic has committed to using several gigawatts' worth of Google TPU capacity.

This article was AI-translated and verified by a human editor